IV.4.2 Definition of data

Most of the data definition is done by functions defined in the “PROJECT/DATA” sub-directory. The
main data files, however, are located directly in the “PROJECT” main directory. These files are:

- “static.rb” that manages the data for the static thermo-elastic and mechanical load cases.

- “dynam.rb” that performs the operations needed for the post-processing of SINE load
  cases (Nastran SOL 111 analyses).

- The “post.rb” script that performs final operations leading to the calculation of Strength

Ratio envelopes, and the output of GMSH visualization files. (See section IV.4.3.)

Data are interpreted by calling different ruby methods that build different kinds of objects:

- The definition of post-processing objects is done by calling functions defined in four ruby
  source files called “postExtractData.rb”, “postInterfaceData.rb”, “postSandwichData.rb”
  and “postStressData.rb”. Each of the four functions called builds a list of specific
  post-processing objects (instances of classes derived from “GenPost”) and returns it
  in an Array.

In general, each post-processing object is instantiated and initialized according to values read
from a CSV file. The CSV files are located in the “PROJECT/DATA/CSV_POST” directory. In the
“PROJECT/static.rb” main source file, the post-processing objects are built by the following
calls:

postList=[]

postList+=getAllStressData()

postList+=getSandwichData()

postList+=getInterfacePostData()

postList+=getStaticExtractData()

The “Static” module also defines an iterator that is just a “wrapper” around another iterator
defined in the “DbAndLoadCases” module. This wrapper iterator looks as follows:

def Static.loop()
  DbAndLoadCases.loopOnStaticCases() do |lcName|
    yield lcName
    GC.start()
  end
end

It is called from the main ruby file “PROJECT/static.rb” as follows:

Static.loop do |lcName|
  puts lcName
  postList.each do |p|
    begin
      p.initCalcSteps()
      p.calculate("Static")
    rescue Exception => x then
      printf("Failed Post object %s with ID %s\n",p.to_s,p.postID())
      PrjExcept.debug(x)
    end
  end
  DbAndLoadCases.saveMosResults(postList)
  DbAndLoadCases.saveSrResults(postList)
end

(Note that we could have called “DbAndLoadCases.loopOnStaticCases()” iterator

directly.)
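The wrapper iterator and the per-object error handling described above can be sketched in a self-contained way. The modules “DemoCases” and “DemoStatic”, the class “DemoPost” and the load case names below are hypothetical stand-ins, not the project's actual code; the sketch only illustrates how one failing “post” object does not prevent the other objects from being calculated.

```ruby
# Hypothetical stand-ins for the project's modules and "post" objects.
module DemoCases
  # Inner iterator: yields each load case name in turn.
  def self.loop_on_static_cases
    %w[LC_THERMAL LC_QS_X].each { |name| yield name }
  end
end

module DemoStatic
  # Wrapper iterator: forwards each name to the caller's block,
  # then triggers garbage collection before the next load case.
  def self.loop
    DemoCases.loop_on_static_cases do |lc_name|
      yield lc_name
      GC.start
    end
  end
end

class DemoPost
  attr_reader :post_id, :done

  def initialize(post_id, fail_on = nil)
    @post_id = post_id
    @fail_on = fail_on   # load case name for which calculate raises
    @done = []           # load cases calculated successfully
  end

  def calculate(lc_name)
    raise "no results for #{lc_name}" if lc_name == @fail_on
    @done << lc_name
  end
end

post_list = [DemoPost.new("A"), DemoPost.new("B", "LC_QS_X")]
failures = []

DemoStatic.loop do |lc_name|
  post_list.each do |p|
    begin
      p.calculate(lc_name)
    rescue StandardError => e
      # A failing object is recorded; the other objects still run.
      failures << [p.post_id, lc_name, e.message]
    end
  end
end

failures.each { |f| puts "Failed post #{f[0]} for #{f[1]}: #{f[2]}" }
```

This sketch rescues “StandardError” so that interrupts still stop the run, whereas the project code shown above rescues “Exception”; which one is preferable depends on how the scripts are run.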

- The definition of static load case data is done by calling different functions of the “Static”
  module defined in “PROJECT/DATA/staticLoadCasesData.rb”. The main function of this
  module is “Static.readDbAndLcDefs”, which reads a CSV file containing the information
  needed to build the FEM databases and the elementary and combined static load cases. Another
  important method is “Static.readLcSelection”, which reads a selection of load cases from
  another CSV file and associates parameters with these load cases. In our example, the CSV
  files are located in the directory “PROJECT/DATA/CSV_LC”.

In the “PROJECT/static.rb” main source file, the databases and load cases are built via the
following lines:

Static.setFemDirName("D:/SHARED/FERESPOST/TESTSAT/MODEL")

Static.readDbAndLcDefs("DATA/CSV_LC/DbAndLoadCases.csv")

Static.readLcSelection("DATA/CSV_LC/Selection.csv")

The details of the data are found in the CSV files.

- For dynamic load cases, which in our examples correspond to the results of a SINE
  calculation, the database and the loop over load cases are defined directly in the main ruby
  file “PROJECT/dynam.rb”. For example, the loop over the different frequencies looks as
  follows:

lcNames=["SINUS_X","SINUS_Y","SINUS_Z"]
#~ lcNames=["SINUS_X"]
resFileType="HDF"
resFileName=femDirName+"/EXEC_HDF5/sol111_ri_xyz_corners.h5"
fMin=-1.0
fMax=10000.0
#~ fMax=53.1
lcNames.each do |lcName|
  DbAndLoadCases.loopOnDynamSubCases(resFileType,
      resFileName,lcName,fMin,fMax,scNbrMax) do |lcNameA,lcNameB|
    puts lcNameB
    postList.each do |p|
      begin
        p.initCalcSteps()
        p.calculate("Dynamic Complex")
      rescue Exception => x then
        printf("Failed Post object %s with ID %s\n",p.to_s,p.postID())
        PrjExcept.debug(x)
      end
    end
    DbAndLoadCases.saveMosResults(postList)
    DbAndLoadCases.saveSrResults(postList)
  end
end

(The trick is to provide the appropriate parameters to the

“DbAndLoadCases.loopOnDynamSubCases” iterator method.)
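To make the role of the iterator parameters more concrete, here is a minimal sketch of an iterator that selects sub-cases inside a frequency window. The function “demo_loop_on_dynam_sub_cases”, the frequency list and the sub-case naming are hypothetical, and the interpretation of the last argument as a maximum number of sub-cases is an assumption; the actual “DbAndLoadCases.loopOnDynamSubCases” method reads its sub-cases from the Nastran result file and may behave differently.

```ruby
# Hypothetical iterator: yields the load case name and a sub-case label
# for each frequency inside [f_min, f_max], limited to the first
# sc_nbr_max matching frequencies (assumed meaning of that argument).
def demo_loop_on_dynam_sub_cases(lc_name, freqs, f_min, f_max, sc_nbr_max)
  selected = freqs.select { |f| f >= f_min && f <= f_max }.first(sc_nbr_max)
  selected.each do |f|
    yield lc_name, format("%s_%.1fHz", lc_name, f)
  end
end

demo_freqs = [5.0, 20.0, 53.1, 100.0]   # made-up output frequencies (Hz)

%w[SINUS_X SINUS_Y].each do |lc_name|
  demo_loop_on_dynam_sub_cases(lc_name, demo_freqs, -1.0, 53.1, 100) do |lc_a, lc_b|
    puts "#{lc_a}: #{lc_b}"
  end
end
```

With such an interface, restricting a run to a few frequencies for debugging only requires changing the window or the maximum count, which matches the commented-out alternatives in the excerpt above.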

The idea of using CSV files to store the definition of parameters is a legacy of the Excel
post-processing described in Chapter VII.4, but it is also a very effective way to define the data.
It improves the readability of the data definition, and combining ruby code with CSV files
provides the flexibility needed to deal with specific cases.

Note that one of the post-processing data definition functions does not read a CSV file: the
“getSandwichData” method defines all its data in ruby code. This was done because only
one corresponding instance is created in the project. In more typical circumstances, however, it
would be advantageous to define the data in a CSV file as well.

In general, the meaning of a parameter in a CSV file depends on the index of the column in which
it is defined. It is therefore the responsibility of the user to verify that, in each CSV data line,
each value is inserted in the appropriate column, so that the ruby code that interprets the CSV
lines fills the appropriate parameters for the construction of each “post” object.
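The positional interpretation of CSV columns can be illustrated by a short sketch that also checks each line before using it. The column layout in “DEMO_COLUMNS”, the function “demo_parse_line” and the sample values are hypothetical and do not reproduce the project's CSV files; the sketch also assumes plain comma-separated lines without quoted fields.

```ruby
# Hypothetical column layout: position => [parameter name, converter].
DEMO_COLUMNS = [
  [:post_id,   ->(s) { s }],
  [:group,     ->(s) { s }],
  [:allowable, ->(s) { Float(s) }]   # Float() raises ArgumentError on bad input
].freeze

# Convert one CSV line into a Hash of parameters, checking the column
# count first so that a shifted or missing value is reported early.
def demo_parse_line(line, line_no)
  cells = line.chomp.split(",", -1).map(&:strip)
  unless cells.size == DEMO_COLUMNS.size
    raise ArgumentError,
          "line #{line_no}: expected #{DEMO_COLUMNS.size} columns, got #{cells.size}"
  end
  params = {}
  DEMO_COLUMNS.each_with_index do |(name, conv), i|
    params[name] = conv.call(cells[i])
  end
  params
end

p demo_parse_line("stress_1,panel_pX,1.5e8", 1)

begin
  demo_parse_line("stress_2,1.5e8", 2)   # one value is missing
rescue ArgumentError => e
  puts e.message
end
```

A check of this kind does not replace the user's own verification, since a value placed in the wrong column of the right type would still be accepted.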

For the post-processing of connections, the CSV file “Interfaces.csv” that defines the data

is formatted following conventions that differ from those adopted for the other types of

post-processing criteria. Correspondingly, the ruby method that reads the CSV lines and interprets

them works differently. The CSV file is characterized by the insertion of directive lines

that start with a keyword and specify how the following CSV lines must be interpreted:

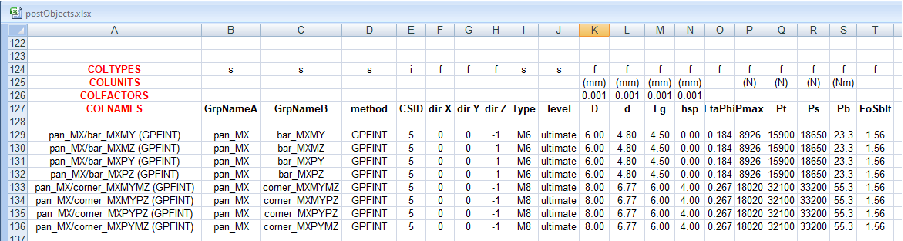

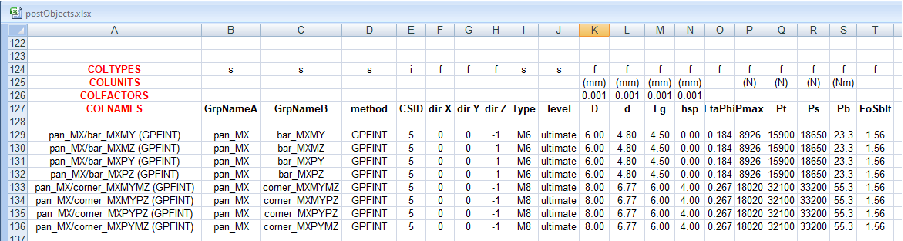

- Directive “COLTYPES” specifies the type of each column in following CSV data lines.

“s” corresponds to “string”, “i” to integer, “f” to real...

- Directive “COLNAMES” defines the name associated to each column. This name is used

to identify what each column corresponds to. It is used by the ruby function to find the

different parameters needed for the building of “post” objects.

- Directive “COLUNITS” is not interpreted by the method but helps the user to remember

the units associated to each value in a CSV line.

- Directive “COLFACTORS” specifies factors applied to the corresponding real values in
  data CSV lines. If the Nastran calculation is done with SI units, the post-processing should
  use the same SI system of units. Then, for example, if data are specified in millimeters, they
  should be converted to meters in post-processing calculations.

An example of CSV lines with interpretation directives is presented in Figure IV.4.1. (Directive keywords

are coloured in red in the Excel worksheet.) We observe that the factor 0.001 is always

associated to data specified in millimeters. This corresponds to the conversion of these values to

meters.
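The directive mechanism described above can be sketched as a small parser. Everything in this sketch is an assumption made for illustration: the function “demo_parse_interfaces”, the column names, the sample data, the convention that the first cell holds the directive keyword (and is empty on data lines), and the rule that a directive stays in effect until it is replaced. The actual method that reads “Interfaces.csv” may differ on each of these points.

```ruby
# Parse comma-separated text in which directive lines set how the
# following data lines are interpreted (assumed layout: first cell is
# the directive keyword, or empty on data lines; no quoted fields).
def demo_parse_interfaces(text)
  types = names = factors = nil
  records = []
  text.each_line do |line|
    cells = line.chomp.split(",", -1).map(&:strip)
    next if cells.all?(&:empty?)          # skip blank lines
    key = cells[0]
    values = cells[1..-1]
    case key
    when "COLTYPES"   then types = values
    when "COLNAMES"   then names = values
    when "COLUNITS"   then next           # documentation only, not interpreted
    when "COLFACTORS" then factors = values.map { |v| v.empty? ? 1.0 : Float(v) }
    else
      raise "data line before COLTYPES/COLNAMES" unless types && names
      rec = {}
      names.each_with_index do |name, i|
        raw = values[i].to_s
        rec[name] = case types[i]
                    when "i" then Integer(raw)
                    when "f" then Float(raw) * ((factors && factors[i]) || 1.0)
                    else raw               # "s" and unknown types stay strings
                    end
      end
      records << rec
    end
  end
  records
end

demo_text = <<~CSV
  COLTYPES,s,i,f
  COLNAMES,name,nbrBolts,diameter
  COLUNITS,,,mm
  COLFACTORS,,,0.001
  ,bracket_1,4,6.0
  ,bracket_2,8,8.0
CSV

demo_parse_interfaces(demo_text).each { |rec| p rec }
```

Because parameters are looked up by column name rather than by position, a new block of directives later in the same file can introduce a different set of columns without any change to the code that consumes the records.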

The approach used for the construction of connection “post” objects is more flexible: it allows
different lines of a CSV file to follow different formats. This is particularly appropriate for the
definition of connection “post” objects, because the post-processing failure criteria may differ
significantly depending on the interface considered.